Assessments

Research & Design

EduMe

Intro

EduMe is a Workforce Success platform: a tool for delivering content to a deskless workforce. EduMe empowers its users to create and deliver training content to their workers’ devices, wherever they are. EduMe excels in its delivery and, because of this, is currently used by on-demand industry leaders such as Uber and Deliveroo.

EduMe’s sales team was struggling to convert trials to contracts. They kept coming up against the same feedback: we didn’t offer customers a way to assess their workers’ knowledge on a topic.

Joining Product Demos

I kicked the project off by joining members of the sales team on their demo calls.

Towards the start of the calls I joined, every prospect asked if there was a way for them to test their workers. Our sales team would always answer no and explain that our question scores were not fit for that purpose: they were not the right tool to identify knowledge gaps in the workforce or the individual.

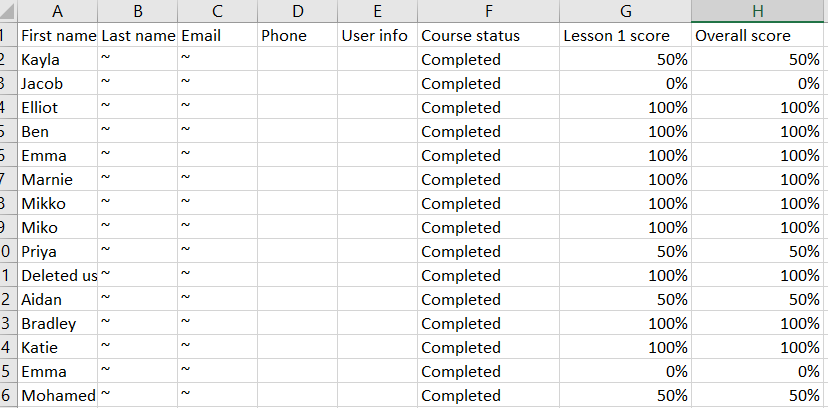

Later in our demos the sales team would show our downloadable CSV reports. As soon as prospects saw these, their eyes would light up and they’d start asking questions again about testing their workers.

When prompted, they said that what they were looking for in a tool like ours was a spreadsheet they could comfortably use as an indicator of what individual workers know.

The sales team would repeat the same lines as before: you can’t use our tool to test knowledge because it doesn’t tell you what workers know.

I asked the sales team why they felt this way and they mentioned:

- Scores are not right due to the interaction (selecting answers until a correct answer is chosen).

- Scores are not right as the user isn’t told they’re being evaluated.

Hypothesis: When using our lessons to test workers’ knowledge on a topic, the output was right but the input was wrong.

Understanding Existing Lesson Scores

Following on from my calls with prospects, and after seeing how the sales team viewed our lesson scores, I became worried that we as a company didn’t understand our own product.

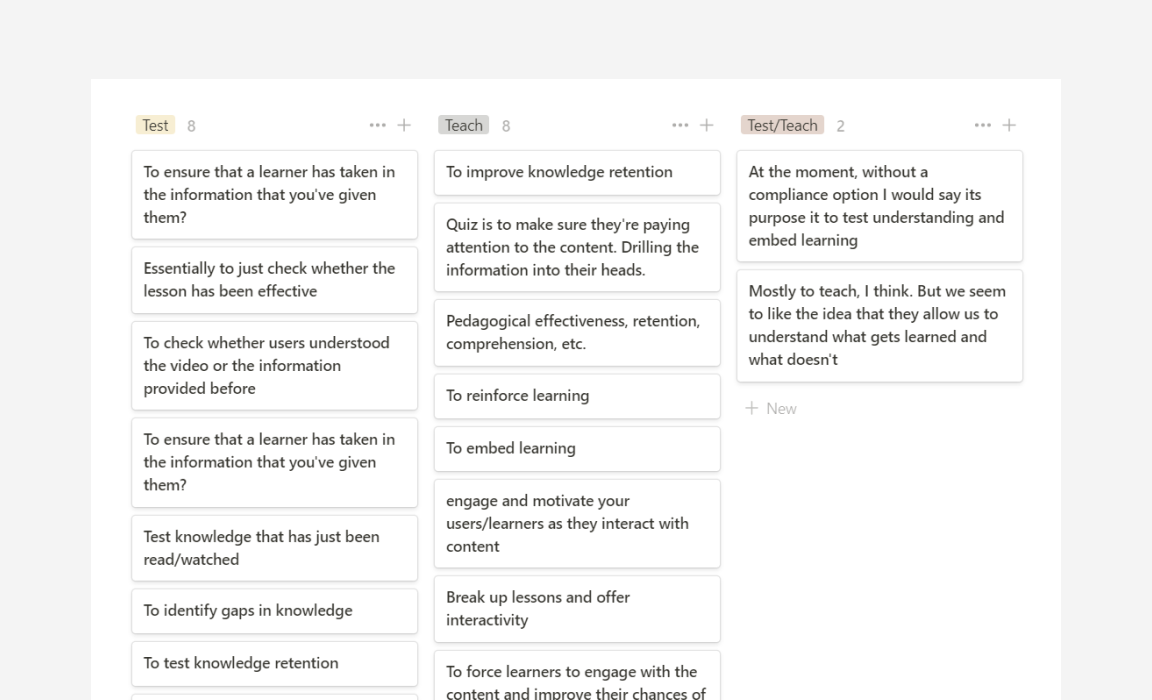

I surveyed 22 employees at EduMe and 5 of our largest clients and asked what they thought the purpose of a question in an EduMe lesson was.

The responses varied from teaching to testing (or some combination of the two). It was clear that we needed to make a decision on the purpose of questions in EduMe lessons.

Depth Interviews

I carried out a handful of interviews with existing customers to validate the findings so far and to get a better understanding of how they were using our lessons, what their training requirements were and how we were meeting them.

I discovered that clients had opted to use third-party tools such as Typeform to run manual tests after delivering EduMe content. This felt like a massive opportunity for the business.

Requirements

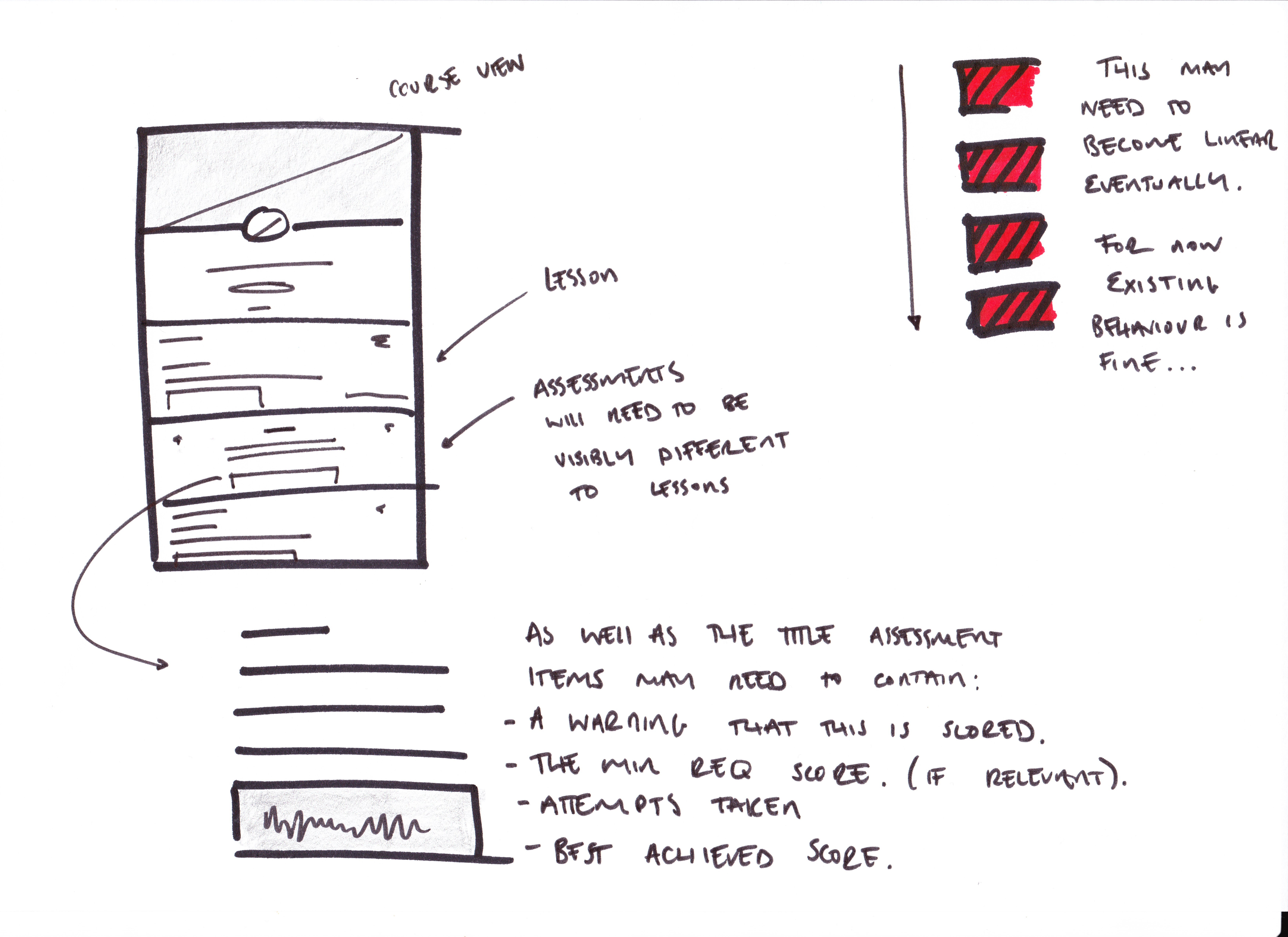

Based on my findings, I proposed that we introduce a new type of scored activity, specifically for testing workers’ knowledge. I presented my research to key stakeholders and ran a session to define a list of requirements:

- A worker needs to know their performance is being scored

- A user can see an individual worker’s score

- A worker should only be able to choose one answer to each question and not keep picking answers until they get it right

- We need to be comfortable telling customers that they can use this to test their workers’ knowledge

- We need to be comfortable telling customers that our lessons are for teaching

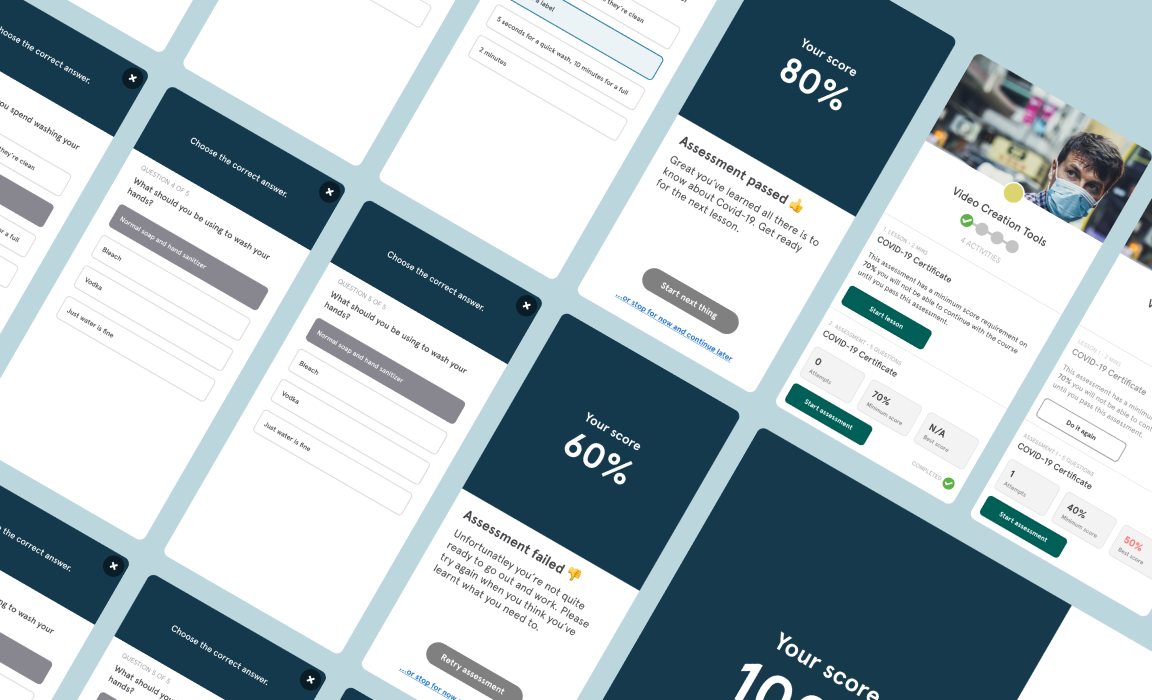

Wireframing, Prototyping & Delivery

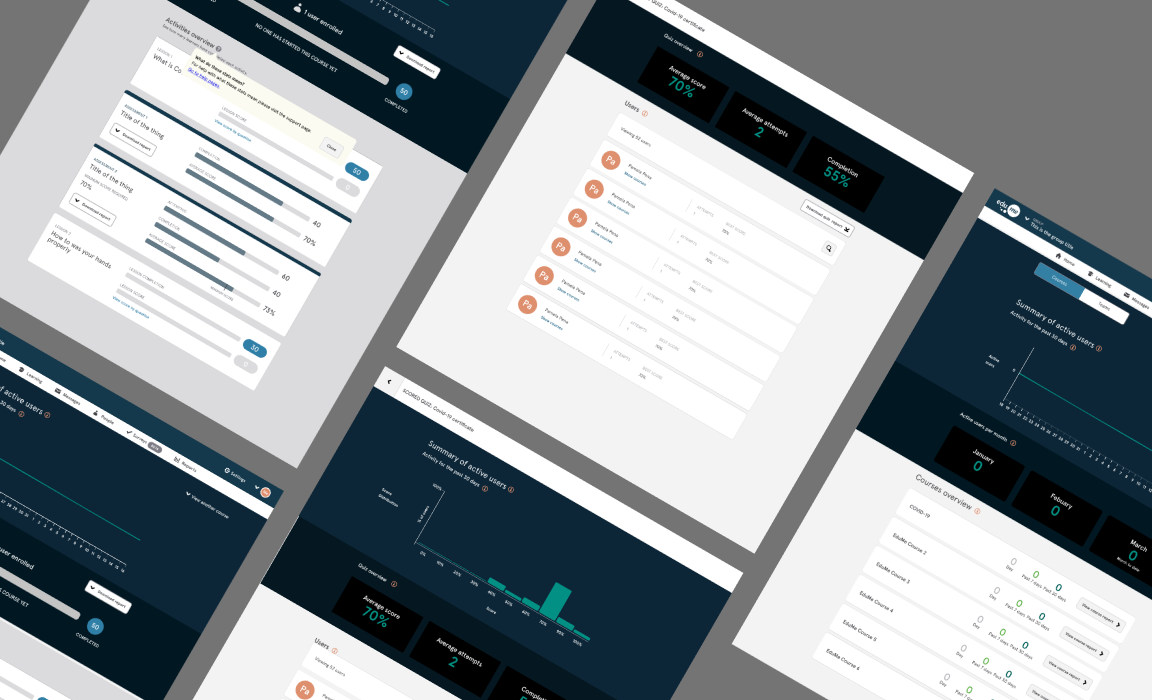

Working collaboratively with developers and our customer success team, I sketched out multiple iterations of an assessments feature and prototyped the interactions.

Based on prospects’ reactions to our downloadable CSV, I understood what they wanted to see as an output, so I started with reporting and worked backwards.

Outcome

It’s early days, so there’s no data yet. Here’s the client feedback so far:

♥ this! So helpful, automates our process & saves lots of admin time

Trainers will be very happy

Every week technicians are asking for this - key for us is exposing the score to users in real time (So much time saved here)